Not Exactly Omni – A look at Human Listener Directivity

This article presents the results of a study on human listener directivity, in which a probe microphone was placed inside the ear canal to take the measurements.

A recent Syn-Aud-Con Listserv thread brought up the subject of human listener directivity. I thought that this might be a good time to revisit some earlier published work on the subject, and supplement it with some of the current applications of this information.

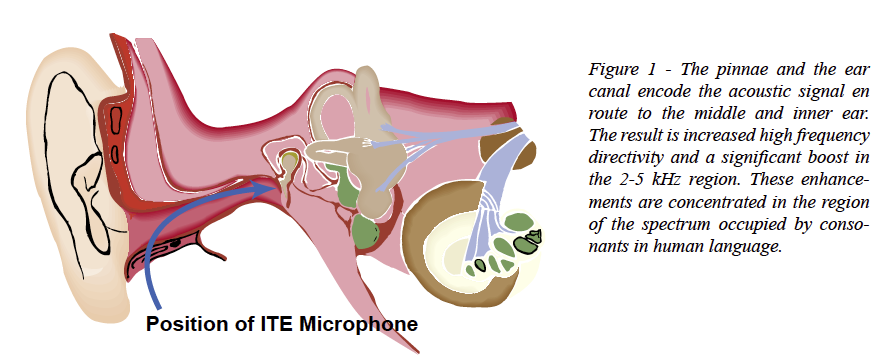

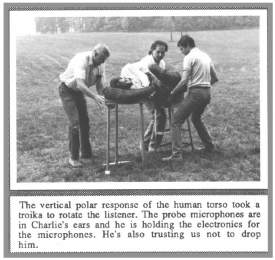

Human polar responses were gathered during the Syn-Aud-Con “3-L” Workshop held in the summer of 1988. The workshop was conducted to research the interactions between the Listener, the Loudspeaker, and the Listening room. This was a productive time in audio history, as the early TEF machines were putting time-domain measurement methods into the hands of an increasing number of audio practitioners. The human polar measurements were carried out on Charlie Bilello. The data were gathered using an In-The-Ear (ITE) miking technique that places a small probe microphone in the pressure zone of the eardrum (Figure 1). This allows the acoustic signal that reaches the eardrum to be measured and the transfer function to be determined. At this point in the listening process, the acoustic signal has already been encoded by:


1. The pinnae response
2. The ear canal resonance
3. Reflections from the head and torso

Position of ITE Microphone

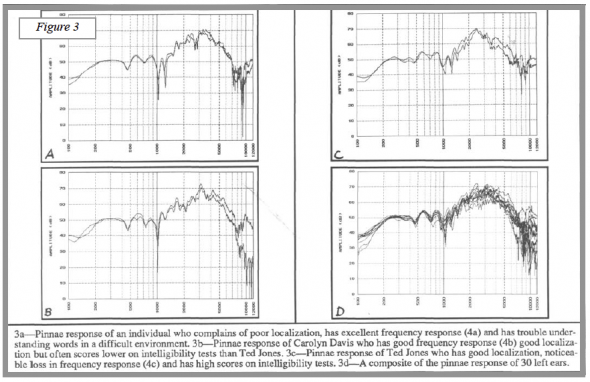

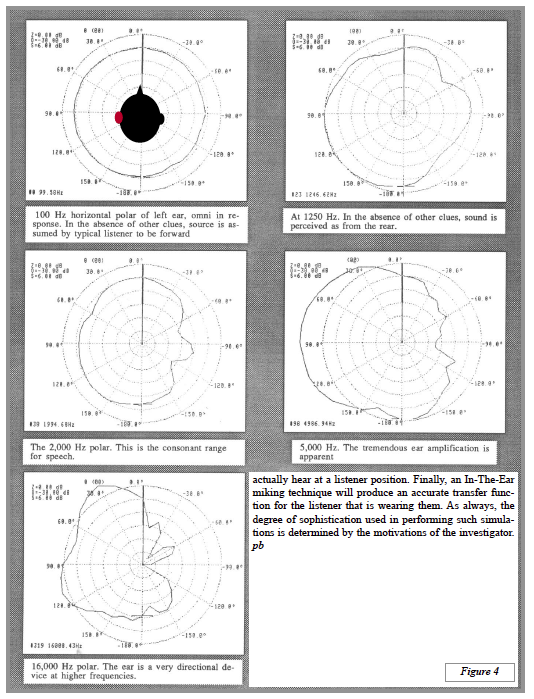

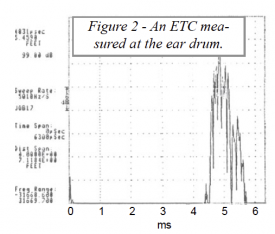

This encoding makes a human polar response bear little resemblance to that of the omnidirectional measurement microphones that we often use to measure rooms. The combined effect of these factors produces the Head-Related Transfer Function, or HRTF. Figure 2 shows an ETC (energy-time curve) measured at the eardrum. Figure 3 shows the “pinnae response” of a number of subjects. The human polars are shown in Figure 4.
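In its simplest form, a transfer function such as the HRTF is a ratio of two spectra, H(f) = Y(f) / X(f): the response measured at the eardrum divided by a reference response. The sketch below is illustrative only. It assumes the reference is a second measurement at the same point with the listener absent (a common way of defining the HRTF), and the function names are hypothetical. It uses a naive DFT so that it needs nothing beyond the Python standard library; it does not reproduce the TEF measurement method used at the workshop.

```python
import cmath
import math


def dft(x):
    """Naive O(N^2) discrete Fourier transform; adequate for short illustrative signals."""
    n = len(x)
    return [
        sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def transfer_function_db(eardrum_ir, reference_ir, floor=1e-12):
    """Return |H(k)| = |Y(k) / X(k)| in dB for each DFT bin k.

    eardrum_ir:   impulse response from the probe mic at the eardrum (hypothetical input)
    reference_ir: impulse response at the same point, listener absent (assumed reference)
    The shorter response is zero-padded so both spectra share the same bins.
    """
    n = max(len(eardrum_ir), len(reference_ir))
    y = dft(list(eardrum_ir) + [0.0] * (n - len(eardrum_ir)))
    x = dft(list(reference_ir) + [0.0] * (n - len(reference_ir)))
    levels = []
    for yk, xk in zip(y, x):
        # Clamp near-zero magnitudes so empty bins yield a large negative dB value
        # instead of a division-by-zero or log-of-zero error.
        ratio = abs(yk) / max(abs(xk), floor)
        levels.append(20.0 * math.log10(max(ratio, floor)))
    return levels
```

For example, an eardrum response that is simply twice the reference, `transfer_function_db([2.0, 0.0, 0.0, 0.0], [1.0, 0.0, 0.0, 0.0])`, gives about +6 dB in every bin. A real ITE measurement instead shows the peaks and notches produced by the pinna, ear canal, head, and torso.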

Advanced acoustical modeling programs use the HRTF to determine how a human listener might perceive sound at a particular point in an auditorium. By placing a “probe” at a specific listener position and selecting a loudspeaker as a source, the room’s impulse response can be predicted using image-source and ray-tracing algorithms. This impulse response can then be convolved with the HRTF to account for the effect of the listener’s head, outer ears, and torso on the sound. Finally, the resulting impulse response can be convolved with dry program material to “listen” to the loudspeaker/room combination at the seat being investigated. This process, known as auralization, is quickly becoming a mainstream tool for room and sound system designers.
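The convolution chain described above can be sketched in a few lines. This is a minimal sketch, not how any particular modeling program works. It assumes that the predicted room impulse response, a left/right head-related impulse response (HRIR, the time-domain counterpart of the HRTF) pair, and the dry program material are short lists of samples at one common sample rate. The function names are hypothetical. Applying one HRIR pair to the whole room response treats every arrival as coming from the same direction, whereas a fuller treatment would apply a direction-dependent HRTF to each arrival.

```python
def convolve(x, h):
    """Direct-form linear convolution; the output has len(x) + len(h) - 1 samples."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y


def auralize(room_ir, hrir_left, hrir_right, dry):
    """Sketch of the chain: predicted room IR -> HRTF -> dry program.

    All inputs are lists of samples at one common sample rate (assumption).
    A single HRIR pair is applied to the whole room response, so every
    arrival is treated as coming from the same direction (simplification).
    """
    # Shape the predicted room impulse response with each ear's HRIR
    binaural_left = convolve(room_ir, hrir_left)
    binaural_right = convolve(room_ir, hrir_right)
    # "Listen" at the seat: convolve dry program material with each ear's response
    return convolve(dry, binaural_left), convolve(dry, binaural_right)
```

As a toy usage example, `auralize([1.0, 0.0, 0.5], [1.0], [0.0, 0.6], [1.0, -1.0])` models a direct sound plus one reflection, with the right ear receiving a delayed, quieter copy. It returns `[1.0, -1.0, 0.5, -0.5]` for the left ear and `[0.0, 0.6, -0.6, 0.3, -0.3]` for the right ear. Because convolution is associative, convolving the dry signal with the room response first and the HRIR second gives the same result. Practical tools use FFT-based convolution instead of this direct loop for speed.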

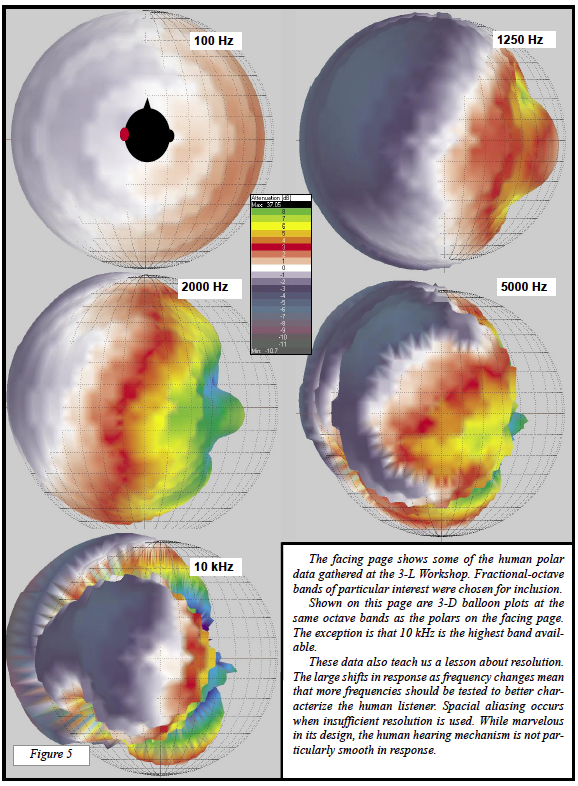

Figure 5 shows the HRTF of a Kemar™ dummy head. The plots were made using the EARS™ module of the EASE™ design suite.

The measurements clearly show what is arriving at the eardrum. As revealing as these data are, they do not include the psychoacoustic characteristics of our auditory system. The Haas (precedence) effect and similar phenomena still do not show up on our analyzers, but understanding the time and frequency intricacies of the auditory system remains vitally important to sound people.

To apply this information to modeling and measuring practices, we can conclude that omnidirectional receivers (or measurement microphones) can be used to document what is physically happening at a point in space. A generic “stereo” receiver (or measurement microphone) can emulate the human listener to a first approximation. A “dummy head” produces a more accurate representation of what a person might actually hear at a listener position. Finally, an In-The-Ear miking technique will produce an accurate transfer function for the listener wearing the microphones. As always, the degree of sophistication used in performing such simulations is determined by the motivations of the investigator. pb