Compression Driver Specifications

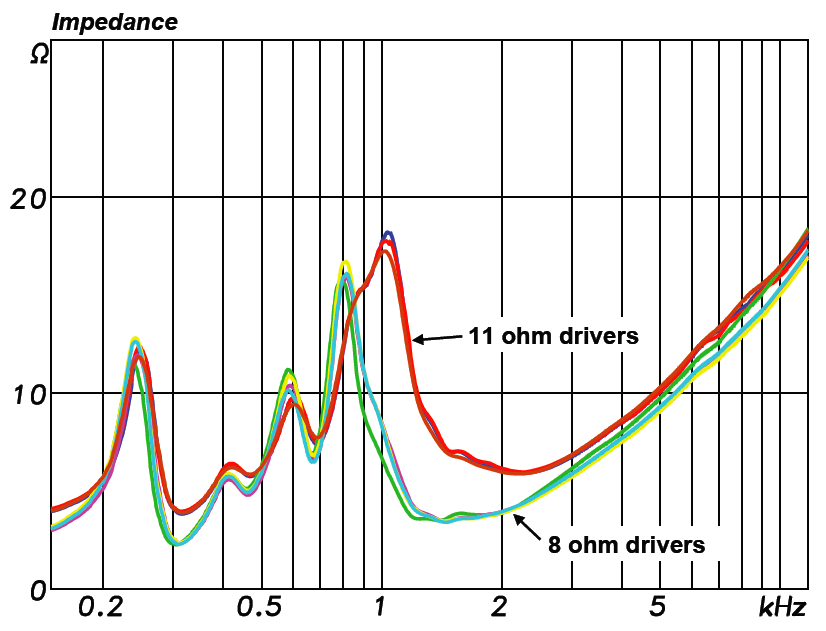

A recent compression driver measurement project afforded the opportunity to test two concepts. The driver line-up was (3) 11 ohm drivers and (4) 8 ohm drivers; these are the “rated” impedance values. All are 1-inch exit devices that would typically be used stand-alone for paging applications or as the midrange device in a 3-way full-range loudspeaker. The measured impedances of the line-up are shown in Figure 1.

Frequency Magnitude Tolerance

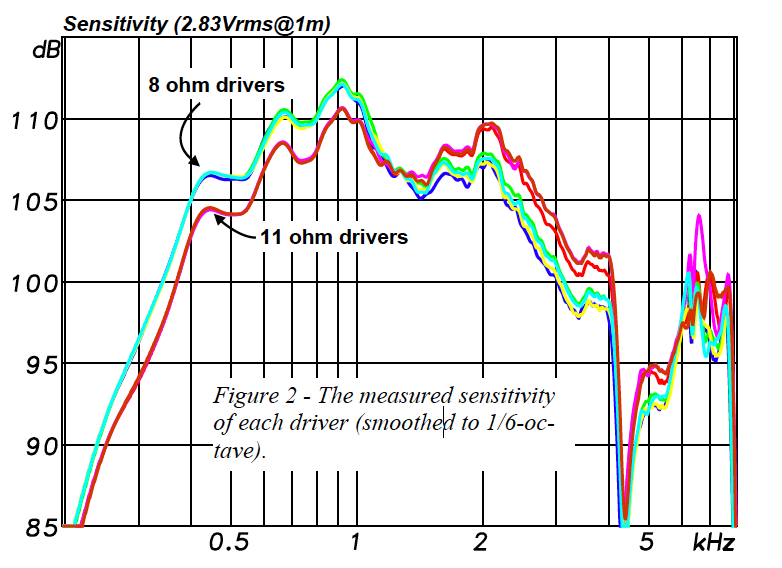

First, I measured the axial transfer function of each driver mounted on the same horn to determine unit-to-unit consistency. The frequency response magnitudes are overlaid in Figure 2. All devices were minimum phase, as is typical for individual transducers.

Can we trust that each like model that comes off the assembly line is the same? Each group of drivers (8 ohms and 11 ohms) is within a 1 dB tolerance, which is adequate for array models and design estimates. I should note that one of the 8 ohm drivers initially differed by 5 dB, but re-seating the diaphragm corrected the problem. Hopefully the loudspeaker manufacturer's quality control would catch this.

Impedance/Sensitivity Relationship

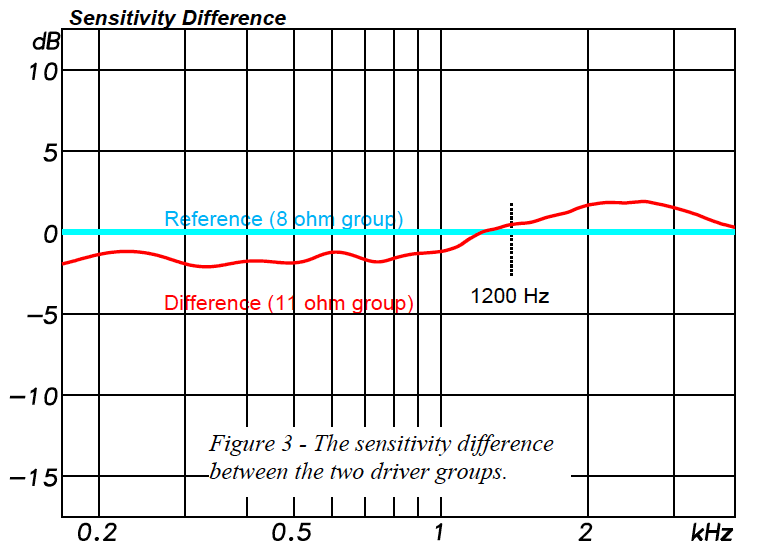

The second (and main) objective was to determine the effect of the driver’s electrical impedance on the sensitivity. All other factors being equal, the sensitivity difference based on impedance should be

Difference = 10 log10(11/8) ≈ 1.4 dB

Theory says that the 8 ohm drivers should have the higher sensitivity because they will draw more current with the same applied voltage.
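The arithmetic behind that 1.4 dB figure can be sketched in a few lines. This is only an illustration: it treats each driver as a purely resistive load equal to its rated impedance, which the measured impedance curves will not match exactly, and it borrows the 2.83 Vrms reference voltage from the conclusions. The function and variable names are my own.

```python
import math

# Assumption: 2.83 Vrms reference drive, as used for voltage-based sensitivity.
V_REF = 2.83

def power_watts(v_rms, r_ohms):
    """Power into an idealized, purely resistive load: P = V^2 / R."""
    return v_rms ** 2 / r_ohms

def level_difference_db(r_high, r_low):
    """dB difference in power drawn by r_low versus r_high at the same voltage."""
    return 10 * math.log10(r_high / r_low)

p8 = power_watts(V_REF, 8.0)    # roughly 1 W into the rated 8 ohms
p11 = power_watts(V_REF, 11.0)  # less power into the rated 11 ohms
delta_db = level_difference_db(11.0, 8.0)

print(f"8 ohm:  {p8:.2f} W")
print(f"11 ohm: {p11:.2f} W")
print(f"expected difference: {delta_db:.2f} dB")
```

The ratio of the two powers reduces to 11/8, so the applied voltage cancels out and the expected difference is the same at any drive level.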

To evaluate this I did the following:

1. Averaged the response of the (4) 8 ohm drivers.

2. Averaged the response of the (3) 11 ohm drivers.

3. Divided the magnitude responses using the 8 ohm average as the reference response.

Figure 3 shows how the group of 11 ohm drivers differs from the group of 8 ohm drivers. As stated above, the ideal and expected difference would be a straight line at -1.4 dB.
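The averaging and division steps described above can be sketched as follows. The numbers are invented placeholders, not the measured data, and the choice to average linear magnitudes (rather than dB values or power) is an assumption, since the averaging method used by the measurement software is not stated. Dividing linear magnitudes is equivalent to subtracting the dB values.

```python
import math

def db_to_linear(db):
    """Convert a level in dB to a linear magnitude ratio."""
    return 10 ** (db / 20)

def linear_to_db(mag):
    """Convert a linear magnitude ratio to dB."""
    return 20 * math.log10(mag)

def average_magnitude(responses_db):
    """Average several responses point by point.

    Assumption: the average is taken on linear magnitude, not on dB values.
    Returns linear magnitudes, one per frequency point.
    """
    n = len(responses_db)
    points = len(responses_db[0])
    return [sum(db_to_linear(r[i]) for r in responses_db) / n
            for i in range(points)]

def relative_response_db(test_mag, ref_mag):
    """Divide the test response by the reference response; result in dB."""
    return [linear_to_db(t / r) for t, r in zip(test_mag, ref_mag)]

# Hypothetical levels in dB at three frequencies (placeholders only).
freqs_hz = [500, 1000, 2000]
group_8_ohm = [[108.0, 110.2, 109.1], [108.4, 110.0, 109.5],
               [107.9, 109.8, 109.0], [108.2, 110.1, 109.3]]
group_11_ohm = [[106.7, 108.6, 109.6], [106.9, 108.8, 109.9],
                [106.6, 108.5, 109.4]]

avg_8 = average_magnitude(group_8_ohm)    # step 1
avg_11 = average_magnitude(group_11_ohm)  # step 2
difference_db = relative_response_db(avg_11, avg_8)  # step 3

for f, d in zip(freqs_hz, difference_db):
    print(f"{f} Hz: {d:+.2f} dB relative to the 8 ohm average")
```

A result near -1.4 dB at a given frequency would match the ideal impedance-based prediction; positive values mean the 11 ohm group is the more sensitive of the two at that frequency.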

The result was approximately as expected below about 1200 Hz. Above 1200 Hz, the 11 ohm drivers surprisingly had a slightly higher sensitivity than the 8 ohm drivers. There can be several reasons for this, including physical issues within the drivers themselves. The objective here was only to make the comparison, not to troubleshoot the results.

Maximum Vrms

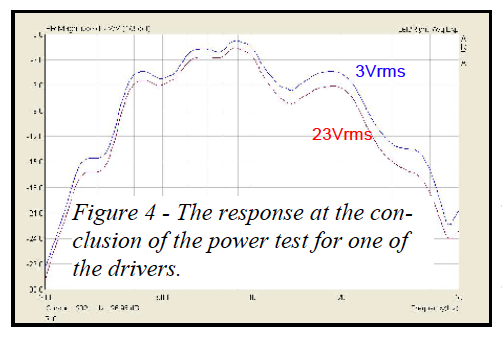

The last test was to measure the maximum Vrms for each driver, using the method described in Loudspeaker Toaster (SynAudCon Newsletter Winter – 2006). The application of 23 Vrms produced a 3 dB change in the transfer function magnitude at 5 kHz (Figure 4).
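To put 23 Vrms in context, the short sketch below converts it to a nominal power into each rated impedance and to a level relative to 2.83 Vrms. It assumes the same 23 Vrms limit applies to both driver groups and uses the rated (not measured) impedance, so the wattages are nominal figures only. The names are illustrative.

```python
import math

MAX_VRMS = 23.0  # voltage that produced the 3 dB change at 5 kHz
V_REF = 2.83     # assumption: reference voltage for sensitivity ratings

def nominal_power_watts(v_rms, rated_ohms):
    """Nominal power into the rated impedance: P = V^2 / R."""
    return v_rms ** 2 / rated_ohms

def level_re_reference_db(v_rms, v_ref=V_REF):
    """Voltage level in dB relative to the reference voltage."""
    return 20 * math.log10(v_rms / v_ref)

headroom_db = level_re_reference_db(MAX_VRMS)

for rated in (8.0, 11.0):
    watts = nominal_power_watts(MAX_VRMS, rated)
    print(f"{rated:.0f} ohm rated: {watts:.1f} W nominal at {MAX_VRMS:.0f} Vrms")

print(f"{MAX_VRMS:.0f} Vrms is {headroom_db:.1f} dB above {V_REF} Vrms")
```

The same voltage corresponds to two different nominal wattages, while the level above the 2.83 Vrms reference is a single number for both groups.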

Conclusions

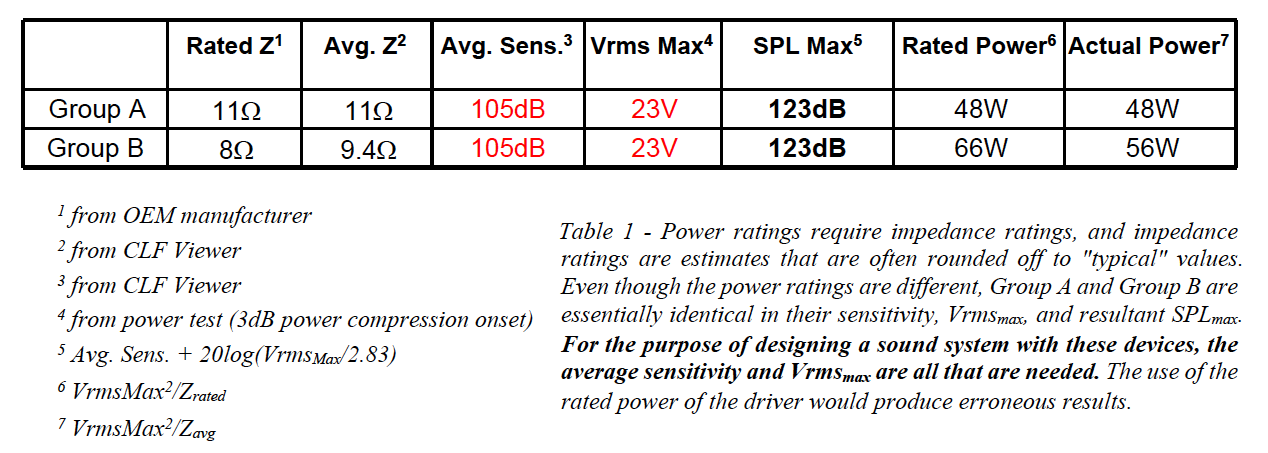

Table 1 summarizes the relevant specifications of the driver line-up. The relationship found between sensitivity and impedance was not as expected. Given that these drivers are driven from voltage sources (modern power amplifiers), the voltage-based metrics tell the story. This reinforces the assertion made by Charlie Hughes in his article (this issue) that loudspeaker sensitivity should be based on the applied voltage (2.83 Vrms), not the applied power. pb